I'm in San Antonio at the moment, attending the Association of Test Publishers 2018 Innovations in Testing Meeting. This morning, I attended a session on a topic I've blogged about tangentially before - can AI replace statisticians? The session today was about whether AI could replace psychometricians.

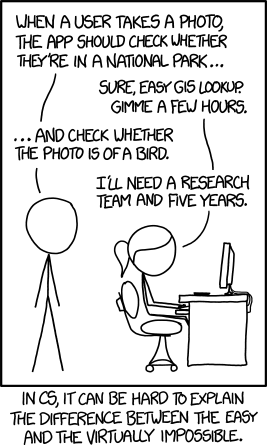

The session had four speakers: one who felt AI could (should?) replace psychometricians, one who argued that it should not, and two who discussed which applications might suit AI and which would still need a human. In the session, the speakers differentiated between automation (which is rules-based) and machine learning (teaching a computer to make context-dependent decisions), or to demonstrate with XKCD:

One involves an automated process of matching the user's location to maps of national parks; the other involves a decision - one that is quite easy for a human - about whether the photo contains a bird. As technology has developed, psychometricians have been able to automate a variety of processes, such as using a computer to adapt a test based on examinee performance or creating parallel forms of a test - something that originally had to be done by a person, but now can be easily done by a computer with the right inputs.

The question of the session was whether psychometricians should move on to using, or be replaced by, machine learning. Many psychometricians will tell you there is an art and a science to what we do. We have guidelines we follow on, for instance, item analysis - expectations about item function across various groups or patterns of responding, or the ability of the item to differentiate between low and high performers (which we call discrimination, but not in the negative, prejudicial sense of the word). But those guidelines are simply one thing we use. We may choose to keep an item with lackluster discrimination if it covers an important topic for the exam. We may drop an item that is performing well because it's been in the exam pool for a while and exposure to candidates is high. And so on.
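To make the rules-based part of item analysis concrete, here is a minimal sketch of one common discrimination statistic: the corrected point-biserial correlation between an item and the total score on the remaining items. Everything specific in it is an assumption for illustration. The response data are made up, the function names are hypothetical, and the 0.2 cutoff is just a frequently cited rule of thumb, not something the speakers recommended.

```python
from statistics import mean, pstdev

def corrected_point_biserial(responses, item):
    """Correlate one 0/1 item with the total score on all *other* items."""
    item_scores = [row[item] for row in responses]
    rest_scores = [sum(row) - row[item] for row in responses]
    sd_item, sd_rest = pstdev(item_scores), pstdev(rest_scores)
    if sd_item == 0 or sd_rest == 0:
        return 0.0  # no variance, so the item cannot discriminate
    m_item, m_rest = mean(item_scores), mean(rest_scores)
    cov = mean((i - m_item) * (r - m_rest)
               for i, r in zip(item_scores, rest_scores))
    return cov / (sd_item * sd_rest)

def flag_items(responses, threshold=0.2):
    """Return indices of items whose discrimination falls below the cutoff.

    The default threshold is an assumed rule of thumb, not a standard.
    """
    n_items = len(responses[0])
    return [j for j in range(n_items)
            if corrected_point_biserial(responses, j) < threshold]

# Made-up data: rows are examinees, columns are items (1 = correct).
responses = [
    [1, 1, 1, 0],
    [1, 1, 1, 1],
    [1, 1, 0, 0],
    [1, 0, 0, 1],
    [0, 0, 0, 1],
    [0, 0, 0, 0],
]

print(flag_items(responses))  # item 3 is unrelated to overall performance
```

A script like this only produces a flag. Whether a flagged item stays because it covers essential content, or an unflagged item goes because of overexposure, is the judgment call that is hard to write down as a rule.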

The take-home message of the session is that automation is good - but replacing us with machine learning is problematic, because it's difficult to quantify what psychometricians do. For machine learning to take over any process, a human first needs to delineate the process and its outcomes. Otherwise, the computer has no outcome to target. So even if a computer could take over psychometricians' job duties, it needs information to predict toward.

As machine learning and computer algorithms improve, more problems can be tackled by humans, and perhaps using machine learning for more aspects of psychometrics would allow us to focus our energy on other advances. But to get there, these topics need to be understood by humans first.
