While I was out to lunch today with a friend, I heard a TV show host once again bring up the old myth that you only use 10% of your brain. In the story, she was talking about what people will look like in 10,000 years. Spoiler alert: We'll have bigger eyes and darker skin. She mentioned that we'd probably also be capable of using more of our brains at that point.

The origin of the "10% myth" is debated, though the most likely source is psychologist William James, who talked about how humans only use a fraction of their potential mental energy. This statement was twisted somehow into the belief that we only use a fraction of our brain - not quite the same thing.

Why does this myth prevail despite its obvious falsehood? After all, if you really sit down and think logically about all the things your brain does (beyond conscious thought), the amount of body energy the brain uses, and the significant impact of insults to the brain (such as stroke or injury), you would have to conclude that we use much more than 10% of it.

One reason this myth may prevail is potential. Or rather, the desire of people to believe they have untapped potential. Believing that you can improve in some way is incredibly motivating. On the other hand, believing that you lack any potential can result in stagnation and inaction (even when you actually can do something).

Some of the early research on the concept of learned helplessness involved putting dogs in no-win situations. Each helpless dog was paired (yoked) with another dog who learned a task. If the learner dog behaved incorrectly, it received a shock - but so did the helpless dog. So while the learner dog received cues that would warn of an impending shock, and could change its behavior to avoid the shock, the helpless dog did not and could not. When the helpless dog was put in the learner dog's place, it did nothing to avoid the shocks. The helplessness of its prior, yoked situation carried over into the learning situation, and prevented the dog from seeing how its behavior could affect the outcome. Later research has demonstrated that humans can also exhibit learned helplessness, and this concept has been used to describe the behaviors of survivors of domestic abuse.

The desire for self-improvement, an outcome of the belief in potential, drives a great deal of our behavior. For instance, I learned that one of the most popular Christmas presents this year was the Fitbit, a wearable device that tracks activity. I also received a Fitbit for Christmas and have so far really enjoyed having it. Not only does it tell me what I'm already doing (number of steps, hours of sleep, and so on), it gives me data that can be used for self-improvement.

With New Year's upon us, many people will probably begin a path to self-improvement through New Year's resolutions. Look for a post about that (hopefully) tomorrow!

Potentially yours,

~Sara

Thursday, December 31, 2015

Saturday, December 26, 2015

Scientific Journalism Follow-Up

As a follow-up to my last post, this story popped up on one of the Facebook pages I follow (Reviewer 2 Must Be Stopped): Four times when journalists read a scientific paper and reported the complete opposite.

Of course, I should point out that even this headline is misleading (oh, the irony!) - though two of the examples are the opposite of what was found, the other two involved applying the findings to different situations and confusing perception with actual behavior. But I digress...

Saturday, December 19, 2015

Peer Review Transparency and Scientific Journalism

The folks over at Nature Communications recently announced that peer reviews will have the option of being published alongside the accepted manuscript. The author(s) will get to decide whether the reviews are published, and reviewers will have the option to remain anonymous or have their identities revealed.

"By enabling the publication of these peer review documents, we take a step towards opening up our editorial decision-making process. We hope that this will contribute to the scientific appreciation of the papers that we publish, in the same way as these results are already discussed at scientific conferences. We also hope that publication of the reviewer reports provides more credit to the work of our reviewers, as their assessment of a paper will now reach a much larger audience."

I wonder, though, what impact this decision is liable to have on the peer review process. As I've blogged before (here and here), there are certainly benefits and drawbacks to peer review. Though the editor has the final decision of whether to publish a paper, peer reviewers can provide important feedback the editor may not have considered, and can be selected for their expertise in the topic under study (expertise the editor him/herself may not have). At the same time, reviewer comments are not always helpful, accurate, or even professional in their tone and wording.

The question, then, is whether peer review transparency will change any of that. Will reviewers be on better behavior if they think their comments may be made public? Perhaps, though they can still remain anonymous. One hopes it will at least encourage them to be more clear and descriptive with their comments, taking the time to show their thought process about a certain paper.

Of course, the impact of peer review transparency wouldn't just stop there. How might these public reviews be used by others? One potential issue is when news sources cover scientific findings. When I used to teach Research Methods in Psychology, one of my assignments was to find a news story about a study, then track down the original article, and make comparisons. Spoiler alert: many of the news stories ranged from being slightly misleading to completely inaccurate in their discussions of the findings. Misunderstanding of statistics, overestimating the impact of study findings, and applying findings to completely unrelated topics were just some of the issues.

Probably part of the issue is lack of scientific literacy, which is a widespread problem. There is no training for journalists covering research findings, though one wonders, if there were, whether it would look something like this:

Source: SMBC Comics (one of my favorites)

Journalists also tend to draw upon other sources when covering research findings, such as talking to other researchers not involved with the study. It seems likely that, if transparent peer review becomes a widespread thing, we can expect to see reviewer comments in news stories about research. If the reviewer has requested to remain anonymous, there would be no way to track down the original reviewer for clarification or additional comments, or even to find out the reviewer's area of expertise. And since reviews stop once the paper has been accepted - but revisions don't necessarily stop then, instead going through the editor - comments may not be completely "up-to-date" with the contents of the paper. So there seems to be some potential for misuse of these comments.

I'm not suggesting we hide this process. In fact, my past blog posts on peer review were really about doing the opposite. I just wonder what ramifications this decision will have on publishing. It should be noted that Nature Communications is planning to assess after a year whether this undertaking was successful. But if it is successful, is this something we think we'll see more of at other journals?

Transparently yours,

~Sara

Saturday, December 5, 2015

Why is Christmas Music Annoying?

We're now at the time of year when it's almost impossible to go anywhere without hearing Christmas/holiday music playing almost constantly. I'm mostly talking about pop Christmas music - multiple covers of "Santa Baby," "White Christmas," or "Rudolph the Red-Nosed Reindeer."

The problem I have with Christmas music is that there's a finite number of these songs, but a theoretically infinite number of covers. The other problem is that, because there are only a fixed number of these songs, artists try different tricks to set their versions apart from the others, tricks that can make the songs sound over-produced or quickly dated. Mind you, sometimes these tricks work and create some really interesting versions. Other times, artists write original songs - and some are actually good.

And I also want to add I certainly don’t blame the artists for releasing all these Christmas or holiday albums - I’m sure for most of them, it’s not their idea.

But if you're anything like me, you cringe just a little when you walk into a store, or flip on the radio, and hear yet another cover of [fill in the blank]. And by the second week of December, you've probably had enough.

What exactly is it about this music that can be so annoying? One possibility is what we’ll call the “familiarity breeds contempt” hypothesis. We hear these songs all the time, and know them well, even if we’ve never heard a particular cover before. They are often repetitive and infectious, like a product jingle or Katy Perry song. You may only have to hear them once, and they're in your memory forever.

Of course, while jingles may be going away (to some extent), repetition and “catchiness” are meant to serve the opposite purpose of making us like something more quickly. In fact, social psychological research suggests that the familiarity breeds contempt hypothesis often isn’t correct, and the opposite is often true. Familiarity can be comforting. Being exposed to a particular racial or ethnic group, depending on context, can increase your positive feelings toward that group (a concept known as the mere exposure effect). So this, alone, may not explain why Christmas music can be so annoying.

Another possibility is intrusion. Some radio stations and stores begin playing Christmas music quite early - I’ve seen some Christmas displays with music as early as September. At that point, we’re still coming to terms with the fact that summer is over, and accepting that we're moving into fall. Seeing/hearing elements of Christmas/holidays where they don’t belong causes annoyance, like we’re skipping directly into winter. And though winter holidays can be joyful and fun, let’s not forget what else comes with winter. In fact, my husband argues that this is why he (and others) leave Christmas decorations up past the holidays: After Christmas is over, it’s just winter. And winter blows.

Remind me to cross-stitch that onto a throw pillow sometime. :)

And because the music starts so early, combined with the above-mentioned repetition, even good Christmas songs will be played again and again and again, until you're insane.

There's also the fact that so many artists have Christmas albums, including ones where it seems completely out of character. Sure, Billy Idol may seem to be having some (tongue-in-cheek) fun with his Christmas album, but it's still a little surreal. And no matter how much Bruce Springsteen tries on "Santa Claus is Coming to Town," it's not rocking. Shouting, yes. But not rocking.

One reason I may find Christmas music particularly annoying is from working in retail. Anyone who has worked in retail knows what it’s like to be forced to listen to music one did not choose all day. My friends have heard me tell stories about the summer Titanic came out, when the player piano at JC Penney’s was programmed to play “My Heart Will Go On” every 15 minutes; my coworkers and I plotted half-jokingly about breaking in and pushing the piano down the escalators.

If you too have been traumatized by repeated listening to "My Heart Will Go On," check this out. Trust me.

Fortunately, the piano had a bit more variety when it came to Christmas music. (Mind you, not a lot; see my previous comment about the finite number of Christmas/holiday songs.)

As with so many things in social psychology, it's likely a combination of factors that results in the outcome. Of course, this is by no means an exhaustive list. What about you, dear readers? Do you find Christmas and/or holiday songs annoying? If so, why do you think that is? (And if you don't, what's your secret?!)

Musically yours,

~Sara

Thursday, December 3, 2015

Fluency, Lie Detection, and Why Jargon is (Kind of) Like a Sports Car

A recent study from researchers at Stanford (Markowitz & Hancock) suggests that scientists who use large amounts of jargon in their manuscripts might be compensating for something. Or rather, covering for exaggerated or even fictional results. To study this, they examined papers published in the life sciences over a 40-year period, and compared the writing of retracted papers to that of unretracted papers (you can read a press release here or the abstract of the paper published in the Journal of Language and Social Psychology here).

I've blogged about lie detection before, and about the fact that (spoiler alert) people are really bad at it. Markowitz and Hancock used a computer to do their research, which allowed them to perform powerful analyses on their data, looking for linguistic patterns that separated retracted papers from unretracted papers. For instance, retracted papers contained, on average, 60 more "jargon-like" words than unretracted papers.

Full disclosure: I have not read the original paper, so I do not know what terms they specifically defined as jargon. While a computer can overcome the shortcomings of a person in terms of lie detection (though see blog post above for a little bit about that), jargon must be defined by a person. You see, jargon is in the eye of the beholder.

For instance - and forgive me, readers, I'm about to get purposefully jargon-y - my area of expertise is a field called psychometrics, which deals with measuring concepts in people. Those measures can be done in a variety of ways: self-administered, interview, observational, etc. We create the measure, go through multiple iterations to test and improve it, then pilot it in a group of people, and analyze the results, then fit it to a model to see if it's functioning the way a good measure should. (I'm oversimplifying here. Watch out, because I'm about to get more complicated.)

My preferred psychometric measurement model is Rasch, which is a logarithmic model that transforms ordinal scales into interval scales of measurement. Some of the assumptions of Rasch are that items are unidimensional and step difficulty thresholds progress monotonically, with thresholds of at least 1.4 logits and no more than 5.0 logits. Item point-measure correlations should be non-zero and positive and item and person OUTFIT mean-squares should be less than 2.0. A non-significant log-likelihood chi-square shows good fit between the data and the Rasch model.

Depending on your background or training, that above paragraph could be: ridiculously complicated, mildly annoying, a good review, etc. My point is that in some cases jargon is unavoidable. Sure, there is another way of saying unidimensional - it means that a measure only assesses (measures) one concept (like math ability, not say, math and reading ability) - but, at the same time, we have these terms for a reason.
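For the curious, here's a minimal sketch (in Python) of the simplest, dichotomous form of the Rasch model, where the chance of a correct answer depends only on the gap between a person's ability and an item's difficulty, both measured in logits. The function name and the example values are mine, purely for illustration; this is not the rating scale version with step thresholds I described above, and it isn't taken from any particular software package.

```python
import math


def rasch_probability(ability, difficulty):
    """Probability of a correct response under the dichotomous Rasch model.

    Both arguments are in logits. The name and the example values below
    are illustrative assumptions, not output from a real analysis.
    """
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


# A person whose ability matches the item's difficulty has a 50/50 chance.
print(rasch_probability(0.0, 0.0))  # 0.5

# The same person facing an item 1 logit easier is more likely to succeed.
print(round(rasch_probability(0.0, -1.0), 3))  # 0.731
```

Notice that only the difference between the two numbers matters, which is part of what lets Rasch put people and items on the same scale.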

Several years ago, I met my favorite author, Chuck Palahniuk, at a Barnes and Noble at Old Orchard - which coincidentally was the reading that got him banned from Barnes and Noble (I should blog about that some time). He took questions from the audience, and I asked him why he used so much medical jargon in his books. He told me he did so because it lends credibility to his writing, which seems to tell the opposite story of Markowitz and Hancock's findings above.

That being said, while jargon may not necessarily mean a person is being untruthful, it can still be used as a shield in a way. It can separate a person from unknowledgeable others he or she may deem unworthy of such information (or at least, unworthy of the time it would take to explain it). Jargon can also make something seem untrustworthy and untruthful, if it makes it more difficult to understand. We call these fluency effects, something else I've blogged about before.

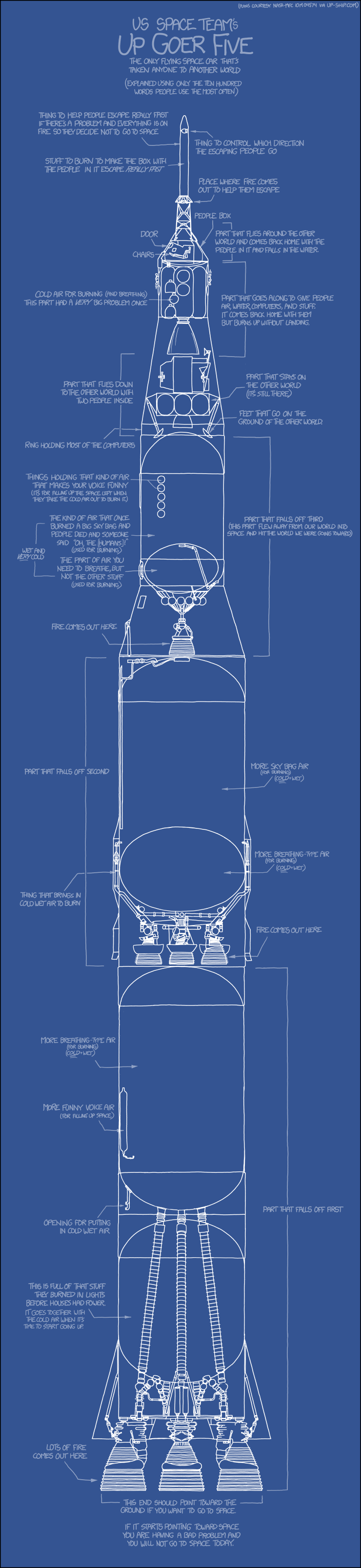

So where is the happy medium here? We have technical terms for a reason, and we should use them as appropriate. But sometimes, they might not be appropriate. As I tried to demonstrate above, it depends on audience. And on that note, I leave you with this graphic from XKCD, which describes the Saturn V rocket using common language (thanks to David over at The Daily Parker for sharing!).

Simplistically yours,

~Sara

Thursday, November 26, 2015

None of Us is Dumb As All of Us Or Two Heads Are Better Than One?: Co-Authors

A recent blog post detailed the rise in frequency of co-authors, including that awkward moment when the list of co-authors is 3 times longer than the paper itself:

Physicists set a new record this year for number of co-authors: a 9-page report needed an extra 24 pages to list its 5,154 authors. That’s a mighty long way from science’s lone wolf origins!

Cast your eye down the contents of the first issue of the German journal, Der Naturforscher, in 1774, for example: nothing but sole authors. How did we get from there to first collaborating and sharing credit, and then the leap to hyperauthorship in the 2000s? And what does it mean for getting credit and taking responsibility? Read more here.

Having worked with co-authors, and also written papers on my own, there are certainly benefits and drawbacks either way. Anyone who has ever worked on group projects in school knows that some group members are helpful and some do little to nothing. The same is true in just about any group undertaking.

There are a variety of social psychological phenomena at play here, such as social loafing, which occurs when people exert less effort in a group than they would on their own. A related concept is diffusion of responsibility - we share responsibility with others, so that if there are more people around, we have less personal responsibility for something than we do on our own.

Groupthink can also occur, where groups actually consider less information, suppress controversial and dissenting views, and frequently make worse decisions than an individual on his/her own.

So why do we even bother working in teams at all?

In addition to co-authors becoming more and more common, and perhaps an expectation, research is becoming more complicated. With complication comes the need for multidisciplinary teams and skill-sets. It's becoming less and less likely that one person will have the necessary skills and knowledge to complete all the pieces of a study, let alone a single manuscript. As a result, collaboration is key.

In fact, groupthink is more likely to occur in a homogeneous group. Diversity, not only in terms of personal characteristics like race and gender, but also knowledge and background, is incredibly important. Obviously, participants in a group also need to feel comfortable expressing dissenting opinions and critically evaluating information, something that is (usually) strongly encouraged in scientific undertakings.

Though co-authors are certainly becoming the norm, I think we can all agree that 5,154 authors is excessive. The thought of coordinating author forms for that many people gives me a headache!

Trivially yours,

~Sara

Tuesday, November 17, 2015

Monday, November 9, 2015

Facial Composites and the Power of Averages

I've previously blogged about facial asymmetry and attractiveness. Research in this area often works with facial composites - photos that combine facial elements of multiple faces - and has found that composites are often viewed as more attractive. This is because composites average across various (often asymmetrical) features.

I saw this post on Facebook today and thought it made a nice follow-up to my post: composite faces using celebrities. Enjoy!

Still asymmetrically yours,

~Sara

Sunday, November 8, 2015

The Importance of Scientific Literacy

The next presidential election is a little less than a year away, but we're already hearing from many presidential hopefuls, including a few we wish would go away. One who springs to mind is Ben Carson.

I've been accused of disliking Ben Carson because of his desire to do away with the Department of Veterans Affairs. (Check out a response from some of the major Veteran Service Organizations here.)

But my bigger issue is that he has demonstrated a poor understanding of science multiple times, such as when he said vaccines are important (thankfully shooting down the autism and vaccines claim) but should be administered in smaller doses, and that the ones that don't prevent death or disability should be discontinued. (As Forbes asks, which ones are those? Preventing death or disability is kind of what they're meant to do.)

Or when he said the theory of evolution was "encouraged" by Satan.

Or that the pyramids are grain silos.

These are all issues that countless scientists, with years of formal education, have spent their careers studying. I'm certainly not saying we should trust them simply because they have years of formal education. That would be a little like believing anything Ben Carson says because he has an MD.

But, even though I'm not a virologist, or biologist, or archaeologist, I can examine what these experts have to say and come to my own conclusions about whether I think they're accurate based on the methods used in the research.

That's because of an important concept called scientific literacy:

Scientific literacy is the knowledge and understanding of scientific concepts and processes required for personal decision making, participation in civic and cultural affairs, and economic productivity. It also includes specific types of abilities...

Scientific literacy means that a person can ask, find, or determine answers to questions derived from curiosity about everyday experiences. It means that a person has the ability to describe, explain, and predict natural phenomena. Scientific literacy entails being able to read with understanding articles about science in the popular press and to engage in social conversation about the validity of the conclusions. Scientific literacy implies that a person can identify scientific issues underlying national and local decisions and express positions that are scientifically and technologically informed. A literate citizen should be able to evaluate the quality of scientific information on the basis of its source and the methods used to generate it. Scientific literacy also implies the capacity to pose and evaluate arguments based on evidence and to apply conclusions from such arguments appropriately. (from the National Science Education Standards)

As the quote above says, you're constantly bombarded with claims in the popular media. Take the recent story about how eating bacon increases your risk of colon cancer by 18%. An examination of this research shows two things: 1) they found this increased risk among people who eat about 2 strips of bacon every day, and 2) the absolute risk of colon cancer without eating bacon at that level is about 5%, and with that level of consumption is about 6% (more here). In fact, this story had a great outcome. People heard the claim, were skeptical, and looked into it.
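To see how an 18% relative increase squares with a jump from about 5% to about 6%, here is a minimal Python sketch of the arithmetic. It assumes the rounded figures quoted above; the function and variable names are made up for illustration and don't come from the study.

```python
def absolute_risk_after(baseline_risk, relative_increase):
    """Apply a relative increase (e.g. 0.18 for 18%) to a baseline risk."""
    return baseline_risk * (1 + relative_increase)


# Assumed inputs, taken from the rounded numbers in the paragraph above.
baseline = 0.05           # about 5% risk without daily bacon
relative_increase = 0.18  # the headline "18%" figure

with_bacon = absolute_risk_after(baseline, relative_increase)

# Difference between the two risks, expressed in percentage points.
difference_in_points = (with_bacon - baseline) * 100

print(f"Risk with daily bacon: {with_bacon:.1%}")
print(f"Absolute increase: {difference_in_points:.1f} percentage points")
```

Under these assumptions, the scary-sounding 18% works out to a shift of a bit less than one percentage point in absolute risk, which is why the headline number and the "5% versus 6%" numbers can both be true.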

Or how about this figure shown during a Congressional hearing about funding for Planned Parenthood, which highlights a related concept of numerical literacy (or numeracy)?

A figure that, based on the numbers associated with the two trend lines, should look more like this:

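Or even a recent analysis of Southwest Airlines' claim of having the lowest fares - read more at my friend David's blog here.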

The big thing we can do is encourage a healthy level of skepticism. Obviously, continuing to provide strong science and math education is incredibly important when preparing children to become voting citizens. But simply getting people to think twice about any "scientific" claim they hear would be a step in the right direction.

~Scientifically yours,

Sara

Monday, October 19, 2015

The Importance of Context, Confirmation Biases, and the Fundamental Attribution Error

Recently, a photo of a group of young women taking selfies at a ball game went viral. Not only did this become an online joke, it was used by some as more evidence that the younger generation is self-absorbed and vain.

However, a recent post on medium.com provided some important context for this picture. I won't repeat everything from the post here - you should definitely check it out at the link above - but in short, the girls, and everyone else in the audience, were asked to take selfies. They were just participating. After the public shaming of these young women, they were offered free tickets as an apology, which they instead asked to be donated to an organization that supports victims of domestic violence.

So that picture above? That's what classy ladies who also know how to have fun look like.

Why were people so quick to believe that these young women were acting out of vanity, not participation? One potential reason is that it confirms a stereotype we have about young people. This phenomenon is aptly called "confirmation bias" - we look for, and remember, evidence that confirms our expectations, and ignore or forget information that disconfirms our expectations. This is one reason that anecdotes are not useful evidence if you're trying to get at the truth of a phenomenon. You might be able to remember, for example, 20 times you observed women drive poorly, but fail to remember the 20 times you observed men drive poorly. (And as I've blogged before, memory can be very biased.)

Another reason, which could occur in concert with confirmation bias, is the fundamental attribution error (or FAE, because damn, is that a really long name). FAE is based on in-group-out-group theories; our in-group is made up of people like ourselves and our out-group is made up of everyone else. How we define that group at a given moment depends on context - it could be gender, race, age group, education level - and research suggests that we begin grouping people as "like us" and "unlike us" based on some pretty arbitrary information: what team you were randomly assigned to, whether someone picked the same painting from two options, etc.

For those who love learning psychological terms to impress your friends, er, expand your knowledge, we call this latter concept the "minimal group paradigm."

FAE basically states that we look for evidence to confirm that people in our in-group are good, and unlike people in our out-group. If we observe someone in our in-group doing something positive, we attribute that to their personality - they're just good people. If we observe someone in our in-group doing something negative, we attribute that to the situation - something made them do that.

And we do the opposite with people in our out-group. When they do something positive, we attribute it to the situation, and when we observe them do something negative, we attribute it to their personality.

When we saw these young women taking selfies at the ball game, many of us attributed it to their personalities - they're vain. No one stopped to consider that maybe there was a situational explanation. Then medium.com came along and demonstrated that there was a situational explanation. And we all felt like assholes, er, said, "if I'd known that information when I saw the picture, I wouldn't have been so quick to judge." Because we're good people. It was the situation. (See how pervasive the FAE is? Hey, I have a PhD in social psychology and I did the same thing.)

Contextually yours,

~Sara

Wednesday, October 7, 2015

Totally Superfluous Movie Review: The Craft

Because it’s October, and because I was talking about this movie with friends recently, I decided to watch The Craft again tonight.

I’ve seen this movie many times – it spawned a generation of young women fascinated with the occult, and has a great soundtrack, including a great cover of “Dangerous Type” by one of my favorite bands, Letters to Cleo.

The thing that struck me about this movie upon watching it again tonight is the underlying theme of belonging. On its surface, the theme seems to be about control – fascination with magic and other supernatural forces stems, at least in part, from a desire to feel in control of the world around us. With magic, we can punish the people who hurt us, make our crush love us, and change our situation, all things that happen in the movie.

But the deeper issue is the need to belong, something we all experience but that is often the focus of our lives as children and teenagers. Sarah (Robin Tunney) first befriends Nancy (Fairuza Balk), Bonnie (Neve Campbell), and Rochelle (Rachel True) because she is a new student and has no friends in a new town. She also meets Chris and falls for him, but is devastated when he rejects her and spreads nasty rumors about her. Her focus then becomes getting Chris to accept (love) her.

Bonnie wants to get rid of her scars, so she can feel normal. Rochelle is stifled socially and on her swim team because of a hateful bully (played by the ever-awesome Christine Taylor, who can pull off everything from plucky love interest to racist bully), and wants to make the bullying stop. Finally, Nancy wishes to escape poverty and an abusive stepfather, because even though she claims she doesn’t care what others think of her, she does (including Chris).

Of course, in trying to belong and feel accepted, they become the monsters they fought so hard against. This is a common theme in horror movies (look for a blog post on that later!). Sarah realizes first that they’ve taken things way too far, unfortunately a little too late to help Chris. When she tries to stop Nancy, Bonnie, and Rochelle from hurting people, they lash out at her. Only through being accepted by (and accepting) Lirio is Sarah able to make things right - or perhaps more symbolically, only through Sarah accepting herself is she able to find the strength to make things right.

If you’re looking for education on Pagan religions, obviously this is not the place to look. Probably one of the most common misconceptions of these religions is that the purpose is to do magic. But the Pagans were farmers, and their religion and ceremonies were built around the harvest and nature. Magic was considered one of many natural forces they sought to understand and, when possible, control.

But if you’re looking for an allegory of adolescence, and the price of belonging (at least in certain ways), check out The Craft!

Craftily yours,

~Sara

Tuesday, September 29, 2015

Totally Superfluous Movie Review: The Taking of Deborah Logan

Last year, during my horror movie binge, I watched a newish movie on Netflix, "The Taking of Deborah Logan." The movie had an interesting premise: an elderly woman with Alzheimer's becomes the subject of a young student's film project, but there is reason to believe that she is experiencing something far worse.

In fact, the poster immediately sets the stage that something very bad is going to happen... or has already happened.

The movie tries to make itself look like a documentary, similar to the Blair Witch Project. The group of filmmakers film themselves and behind the scenes because, well, they're filmmakers. They like cameras. Of course, it's obvious early on that the movie is fictional:

One, because of a recognizable actress in the role of Deborah's daughter.

Two, because the poster highlights the producers' other, fictional movies.

And three, because just like in Blair Witch, you reach a point where you begin to wonder why the characters continue to film or hold the camera, rather than drop and run, or at least put it down to argue with each other. The level of commitment to recording every moment is not completely believable, no matter how devoted the filmmakers are to their craft.

The movie packs lots of creepy scenes and a few legitimate scares. The movie is well-paced, well-acted, and at times, truly terrifying. That's why it pains me to say I really didn't like it.

Or rather, the psychologist in me, with an awareness of the history of treatment of mental and neurological illness, didn't like it. I'm probably not giving away a huge spoiler when I say that Deborah is possessed by something evil. In fact, this becomes a suspicion very early on with some strong evidence to support it. The evidence simply gets stronger and scarier as the movie goes on.

For centuries, people with mental and neurological illnesses were accused of being possessed. They were exorcised, treated cruelly, and had holes drilled in their heads to "let the evil spirits out." Some with an awareness of what was happening to them may even have come to believe they were possessed. Though modern medicine has certainly moved past this point to recognize legitimate illness, there are still some nutjobs, er, people who believe that illness is demonic possession. And even among people with a more modern understanding of illness, many conditions are still treated as unimportant or something to be ashamed of.

This is why it really bothered me that this movie seemed to dismiss that history for cheap scares. I'm sure the filmmakers did not intend to suggest mental and neurological illnesses aren't "real." But there are many people who fail to learn from history - some are doomed to repeat it, some are doomed to make thoughtless mistakes that appear malicious. An extreme, recent example:

Overall, I'm afraid I can't give The Taking of Deborah Logan high marks, or a recommendation to my fellow horror movie lovers. There are many things to like about the movie. But the psychologist in me just can't get past this issue.

Thoughtfully yours,

~Sara

Tuesday, September 15, 2015

The Comfort of Home, The Thrill of the Unknown

I've gotten out of the habit of blogging regularly once again. I recently went out of town for work, and came back to have a lot of catching up to do on various work tasks. Though I loved the trip and had a great time, by the end, I was thrilled to be back home.

I started thinking about this interesting juxtaposition - how people can love traveling and seeing new places but also love the comfort of the familiar. And what I think it comes down to, at least in part, is cognitive resources.

Social psychologists Susan Fiske and Shelley Taylor postulated that people are cognitive misers; they are thrifty with their cognitive resources, and avoid using them if they can coast through a situation on mental autopilot.

This is certainly true, but I think there's a little more to it. We're cognitive neophiliacs; new things get our attention and encourage us to use some of our cognitive resources, to a point. But when new situations become overwhelming or threaten to use more resources than we have or are willing to give, we tune out. Things that are familiar take little to no mental energy, which is why they could be comforting at some times and stifling at others.

In fact, exerting mental energy depends on two things: ability, a trait, and motivation, a state. Some people begin with higher ability than others, but if motivation is low, it might be difficult to tell the difference. Further, some people have a high motivation to use mental resources - we refer to them as being high in "need for cognition." They tend to also have high ability, and are the true cognitive neophiliacs. They love thinking through situations and ideas, and thrive on learning new things. But even people with low need for cognition are willing and able to use mental resources at some point in time.

When you travel somewhere new, you're bombarded with new sights and sounds, maybe even a different language than you're used to hearing. You have to learn new paths to take to get where you're going, because your cognitive maps aren't very useful in a new location. And I'm not just talking about navigating to attractions, since many people use GPS or maps to get there, but even the simple act of waking up in the morning and getting ready requires a mental map that differs from your usual routine. If you're able and motivated, you will likely thrive in this new situation.

But mental resources are limited, and we all reach a point where we have no more left to give. It just takes some people longer to get there than others. It's at this point that we are attracted to the familiar, where we can once again rely on our mental autopilot (good old Otto).

I've been home for almost a week, and honestly, I'm starting to get the travel bug again. But it's nice to return to familiar sights, sounds, and people. So instead, I'm thinking of directing my replenished cognitive resources toward learning something new and improving in my daily activities. Hopefully there will be another trip in my near future, though.

Cognitively yours,

~Sara

Saturday, August 22, 2015

Peer Into the World of Academic Publishing: Part II

When last I blogged, I was giving an overview of peer review. This time, I'm back with some examples from previous reviews I've received.

Peer review is a little like grading. Some teachers/professors give really clear, helpful feedback and offer strong explanations for why you earned the grade you did. And, well, some teachers/professors don't.

However, in this case, the stakes are higher, because journals have (often physical) limitations on how many papers they can actually select. Even as more journals become online-only and open access (free to read), there are still usually limitations on how many articles they will include within a given volume (to refer to the publishing year) and issue (the individual copies of the journal published throughout the year - for instance, a monthly journal would have 12 issues).

Peer review helps to keep that field of eligible articles smaller. Unfortunately, there is little training for reviewers, and reviewers themselves rarely get feedback on the reviews they write. So the nonsensical stay nonsensical, the terse stay terse, and the cruel stay cruel. Hopefully this also means that the good stay good.

But this may not be the case. Peer review brings with it few perks. It doesn't pay. It doesn't (always) increase your chance at getting published in a journal. It can be time-consuming and thankless.

Why then do we do it? It is seen as "service" to the profession, something people are strongly encouraged to do. People usually include journals they've reviewed for on their C.V. It can lead to bigger and better things, like being on the editorial board of a journal, which brings many perks. And it can be rewarding in other ways.

Part of my motivation for reviewing is that it feels good to give feedback to others, with the goal of helping them improve. And, though I've received some awful reviews, I've also received some really amazing ones - reviews that weren't always positive, but were always helpful. Reviewing is a way to give back.

Here are my thoughts on peer review - what it is and what it is not:

1. It can be very subjective, but that doesn't necessarily mean reviewers are wrong.

Reviews can be extremely vague and contain language like "I didn't like..." or "X didn't work for me..." It can be frustrating, when you're presenting information about a scientific study and providing detail on aspects of the study so people can critique it objectively, to hear mere opinions with no concrete examples. Still, that doesn't mean the reviewer is wrong. For instance:

And then there's this great example from Shit My Reviewers Say demonstrating bias against an entire discipline:

4. It can make you laugh or cry - choose to laugh.

I think this is just good advice for life. If this is part of your job, you can't let every bad review get you down. It helps to find some humor in the situation. For instance, as I work on my revision, I use the Word comments feature to show where a change is needed based on a reviewer comment. I occasionally put snarky comments alongside. Obviously those are deleted before I resubmit. But they make me laugh so I keep doing it.

5. Some of the feedback will be helpful and some will be completely ridiculous.

I tried to find my very first peer review and sadly, couldn't locate it. But one comment was so ridiculous, I remember it (maybe not verbatim). The reviewer stated that my study contained serious methodological flaws. Said reviewer could not readily identify these flaws but knew they were there because I "did not confirm my hypothesis."

Okay, to repeat something I tell my Research Methods students again and again: You do not critique the validity of a study based on its findings. You critique based on its methods.

As for the part that implies a good study should confirm its hypothesis - yeah, I'm not even going into what's wrong with that. So I'll just sum up what would ultimately end up being a multi-part blog post rant with some choice phrases: scientific method, type I error, the tentative nature of scientific findings.

6. There will be times, when reading a review, that you will wonder, "Did they even read the **** paper?!"

And you know what, maybe they didn't. Or maybe they skimmed it. Or maybe they did actually read it, and weren't able to absorb this information after one read. Because most of your readers, if the paper is published, will probably also just read it once and absorb what they can from it, feedback from this kind of reviewer is actually very helpful.

7. It can make you a better writer, if you let it.

When you're writing or talking about something you know a lot about, you sometimes take certain information for granted. Most of the time, when we communicate about a study we're conducting, it is with other people involved in the study. But when you write up your findings for publication, you're addressing a new audience: people who know nothing about what you did and who may know very little about a topic.

Receiving peer reviews help highlight that information you may have taken for granted. Over time, with experience at revising based on reviews, you get better about thinking of what information your reader would want to know. This makes you a better writer, at least in terms of scientific writing.

8. Any given review is just one person's opinion.

So don't let bad reviews get you down. At the end of the day, it's just one person's opinion. My favorite example:

This comment was about one of my most cited papers. I receive emails 1-2 times a month and requests on Research Gate 2-3 times a month asking for a copy. I like to think its contribution is more than just small.

But this goes both ways - any good review is also just one person's opinion. You shouldn't let bad reviews eat away at you. But at the same time, you can't simply ignore bad reviews and listen only to the good ones, because the good ones are opinions too, opinions that might not be shared by very many people. Take what you can from both.

Peer review is a little like grading. Some teachers/professors give really clear, helpful feedback and offer strong explanations for why you earned the grade you did. And, well, some teachers/professors don't.

However, in this case, the stakes are higher, because journals have (often physical) limitations on how many papers they can actually select. Even as more journals become online-only and open access (free to read), there are still usually limitations on how many articles they will include within a given volume (which refers to the publishing year) and issue (the individual copies of the journal published throughout the year - for instance, a monthly journal would have 12 issues).

Peer review helps to keep that field of eligible articles small. Unfortunately, there is little training for reviewers, and reviewers themselves rarely get feedback on the reviews they write. So the nonsensical stay nonsensical, the terse stay terse, and the cruel stay cruel. Hopefully this also means that the good stay good.

But this may not be the case. Peer review brings with it few perks. It doesn't pay. It doesn't (always) increase your chances of getting published in a journal. It can be time-consuming and thankless.

Why then do we do it? It is seen as "service" to the profession, something people are strongly encouraged to do. People usually include journals they've reviewed for on their C.V. It can lead to bigger and better things, like being on the editorial board of a journal, which brings many perks. And it can be rewarding in other ways.

Part of my motivation for reviewing is that it feels good to give feedback to others, with the goal of helping them improve. And, though I've received some awful reviews, I've also received some really amazing ones - reviews that weren't always positive, but were always helpful. Reviewing is a way to give back.

Here are my thoughts on peer review - what it is and what it is not:

1. It can be very subjective, but that doesn't necessarily mean reviewers are wrong.

Reviews can be extremely vague and contain language like "I didn't like..." or "X didn't work for me..." It can be frustrating, when you're presenting information about a scientific study, providing detail on aspects of the study so people can objectively critique, to hear mere opinions with no concrete examples. Still, that doesn't mean the reviewer is wrong. For instance:

When I first read this, I was really frustrated. It gave me no guidance on how to write the paper differently. The introduction was written in a [background on a topic, hypothesis on that topic] format, so I didn't understand a) how the intro didn't lead to the hypotheses or b) how to rewrite it so that it led to them better. It didn't tell me which variables were unclear or, on the flip side, which variables were clear, so I could focus on the remaining ones. And the other reviewers had no issues with the introduction.

But after I got over my frustration and started reading the paper, I realized that I could use this feedback to improve. It's easy to think that reviewers are overly critical and to ignore their feedback. Actually digging in and getting what you can from it is challenging, but it can be very rewarding. So I reworked the intro, and you know what? The paper was much better as a result. It was accepted, and is now one of my most cited papers.

2. Reviews can range from inspiring to demoralizing, sometimes both in a single review.

My favorite example of this is the highly positive review that ends with "reject":

Once again, it's easy to get annoyed. But keep in mind that not every paper fits a particular journal. You might have written an excellent qualitative paper, but if you submitted it to a journal that only publishes quantitative work, you're likely to get a review just like the one above.

3. It can expose reviewer bias about topics, methods, analysis approaches, and even academic disciplines.

As a mixed methods researcher, I do a lot of work that is either partially or fully qualitative (that is, results expressed not in numbers but in narratives, gathered through methods like interviews and focus groups). Without fail, I seem to get one reviewer who does not get qualitative research, and does not like qualitative research. Here are some of my favorite examples of such:

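You heard it here first, folks: quotes aren't results.

And then there's this great example from Shit My Reviewers Say demonstrating bias against an entire discipline: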

4. It can make you laugh or cry - choose to laugh.

I think this is just good advice for life. If this is part of your job, you can't let every bad review get you down. It helps to find some humor in the situation. For instance, as I work on my revision, I use the Word comments feature to show where a change is needed based on a reviewer comment. I occasionally put snarky comments alongside. Obviously those are deleted before I resubmit. But they make me laugh so I keep doing it.

5. Some of the feedback will be helpful and some will be completely ridiculous.

I tried to find my very first peer review and sadly, couldn't locate it. But one comment was so ridiculous, I remember it (maybe not verbatim). The reviewer stated that my study contained serious methodological flaws. Said reviewer could not readily identify these flaws but knew they were there because I "did not confirm my hypothesis."

Okay, to repeat something I tell my Research Methods students again and again: You do not critique the validity of a study based on its findings. You critique based on its methods.

As for the part that implies a good study should confirm its hypothesis - yeah, I'm not even going into what's wrong with that. So I'll just sum up what would ultimately end up being a multi-part blog post rant with some choice phrases: scientific method, type I error, the tentative nature of scientific findings.

6. There will be times, when reading a review, that you will wonder, "Did they even read the **** paper?!"

And you know what, maybe they didn't. Or maybe they skimmed it. Or maybe they did actually read it, and weren't able to absorb the information after one read. Because most of your readers, if the paper is published, will probably also read it just once and absorb what they can from it, feedback from this kind of reviewer is actually very helpful.

7. It can make you a better writer, if you let it.

When you're writing or talking about something you know a lot about, you sometimes take certain information for granted. Most of the time, when we communicate about a study we're conducting, it is with other people involved in the study. But when you write up your findings for publication, you're addressing a new audience: people who know nothing about what you did and who may know very little about a topic.

Receiving peer reviews helps highlight the information you may have taken for granted. Over time, with experience at revising based on reviews, you get better at thinking about what information your reader would want to know. This makes you a better writer, at least in terms of scientific writing.

8. Any given review is just one person's opinion.

So don't let bad reviews get you down. At the end of the day, it's just one person's opinion. My favorite example:

This comment was about one of my most cited papers. I receive emails 1-2 times a month and requests on ResearchGate 2-3 times a month asking for a copy. I like to think its contribution is more than just small.

But this goes both ways - any good review is also just one person's opinion. You shouldn't let bad reviews eat away at you. But at the same time, you can't simply ignore bad reviews and listen only to the good ones, because the good ones are opinions too, opinions that might not be shared by very many people. Take what you can from both.

Wednesday, August 19, 2015

Peer Into the World of Academic Publishing: Part 1

Writing research articles is a big part of my job, as well as a necessity in getting grant funding and/or certain jobs. A big part of writing articles is dealing with reviewers and determining how to revise an article based on those reviews. In fact, at the moment, I'm working on two revisions.

But it occurred to me that the world of peer-review publishing may seem a bit foreign to some, so I thought I'd write today about what peer review is, why it's so important, and what it's not. This is going to be a long post, so I'll be dividing it up. I'm also planning to share (in a not-too-distant future post) some of my favorite reviews, many of which are - believe it or not - pretty demoralizing. Perhaps one of the best things I've developed as part of this job is thicker skin when it comes to criticism of my writing and understanding of research.

Because both of those things have been challenged in this crazy research world. That's right, I've been called a bad writer and a poor methodologist. Sometimes for the same article that another reviewer calls well-written and meticulously planned. Everyone's a critic.

No, really - in the world of academic publishing, everyone is a critic, or at least, has the potential to be. (Dying for some funny reviews now? Check out Shit My Reviewers Say.)

In scientific fields, especially in grant-funded careers and at certain types of universities, there is an expectation to publish research results. We in academia/research call this "publish or perish." And it can be pretty brutal.

For the moment, we'll skip over the need to come up with research ideas that grant reviewers like in order to do research to begin with, and move straight to writing research up for publication. After research is complete (and yes, sometimes while research is in progress, if we have early results to share), we begin writing scientific articles that talk about the research we did, how we did it, and what we found. At some point during the writing, we begin thinking about what journal we think would be most likely to publish our paper.

And if you're like me, you have a second, third, even fourth option, because reviewers.

After the paper is complete, we submit it to our first-choice journal. It goes to an editor, who usually reads over the article to make sure it's the type of paper they would want to publish, and also to ensure the article is in the right format, and often to make sure it contains no author information, if the journal uses double-blind peer review. Then he or she selects reviewers to contact about reviewing the paper.

As I said, at some journals, the reviewer(s) receive the paper with no author information. We call this double-blind because the reviewers don't know who wrote the article and the authors don't know who reviewed the article. This allows us all to remain cordial with each other at conference happy hours.

It also, supposedly, makes academic publishing a level playing field. The world-renowned scholar in X does not get to use her name to get published, and the brand new grad student studying X does not have to worry that no one in the field has heard of him (yet).

The reviewers read the article and recommend to the editor whether it should be 1) accepted without revision (HAPPY DANCE!), 2) accepted pending revision (Happy dance!), 3) revised and resubmitted without guarantee of acceptance (Um, happy dance?), or 4) rejected (...).

Good reviewers will also provide some feedback to the authors, letting them know why the reviewer made this decision and how the paper could be improved, whether it is resubmitted to the same journal or submitted somewhere else.

But nothing says a reviewer has to give feedback. And some are very terse. I have received two reviews, I suspect from the same person (different articles, but submitted to the same journal a year or so apart), that totaled 4 sentences. In one of these cases, the paper was invited for a revision, so I had to make what I could of those 4 sentences, but base most of my revision on reviewer 2, who was one of the most awesome reviewers I've ever had.